Objective

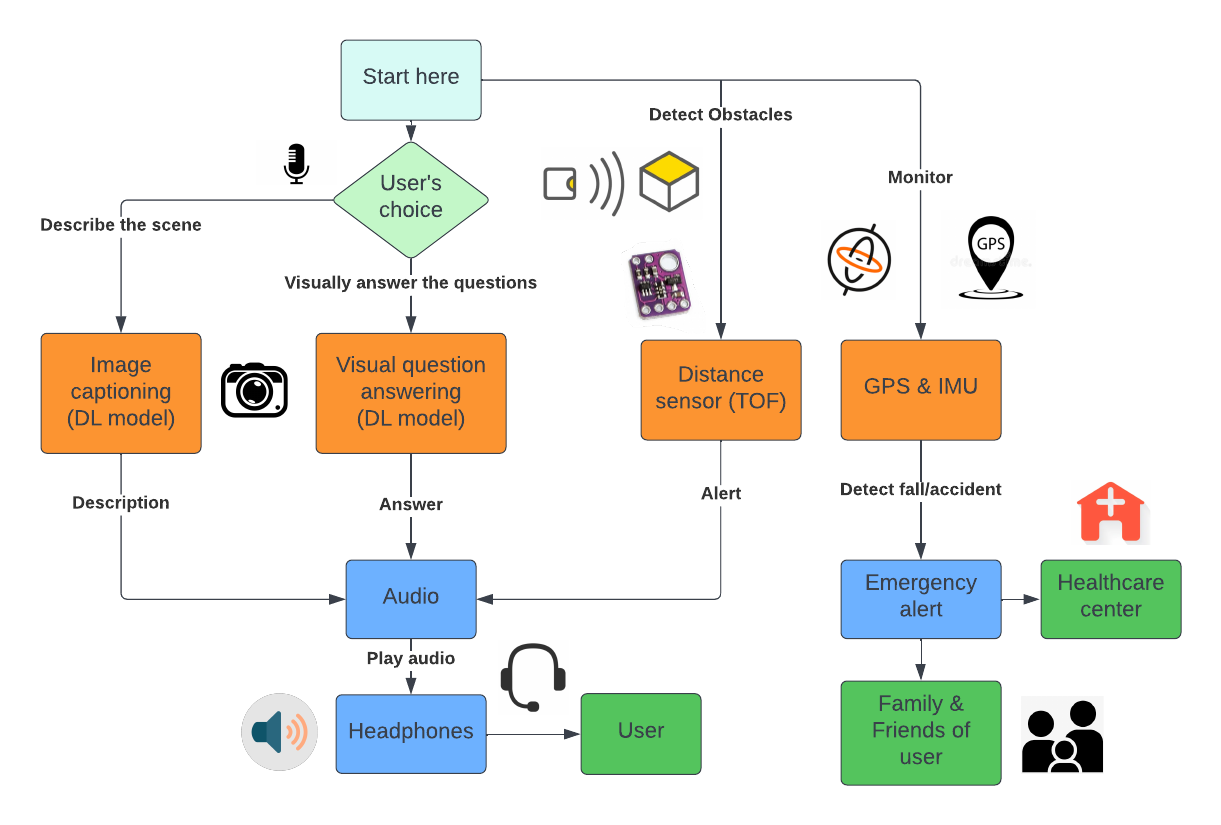

I served as the primary mentor for this project. Our goal was to address the needs of visually impaired individuals by creating a wearable, cost-effective device that provides audio instructions based on the user's surroundings.

Tech Stack

- Deep Learning Models: CLIP and LXMERT

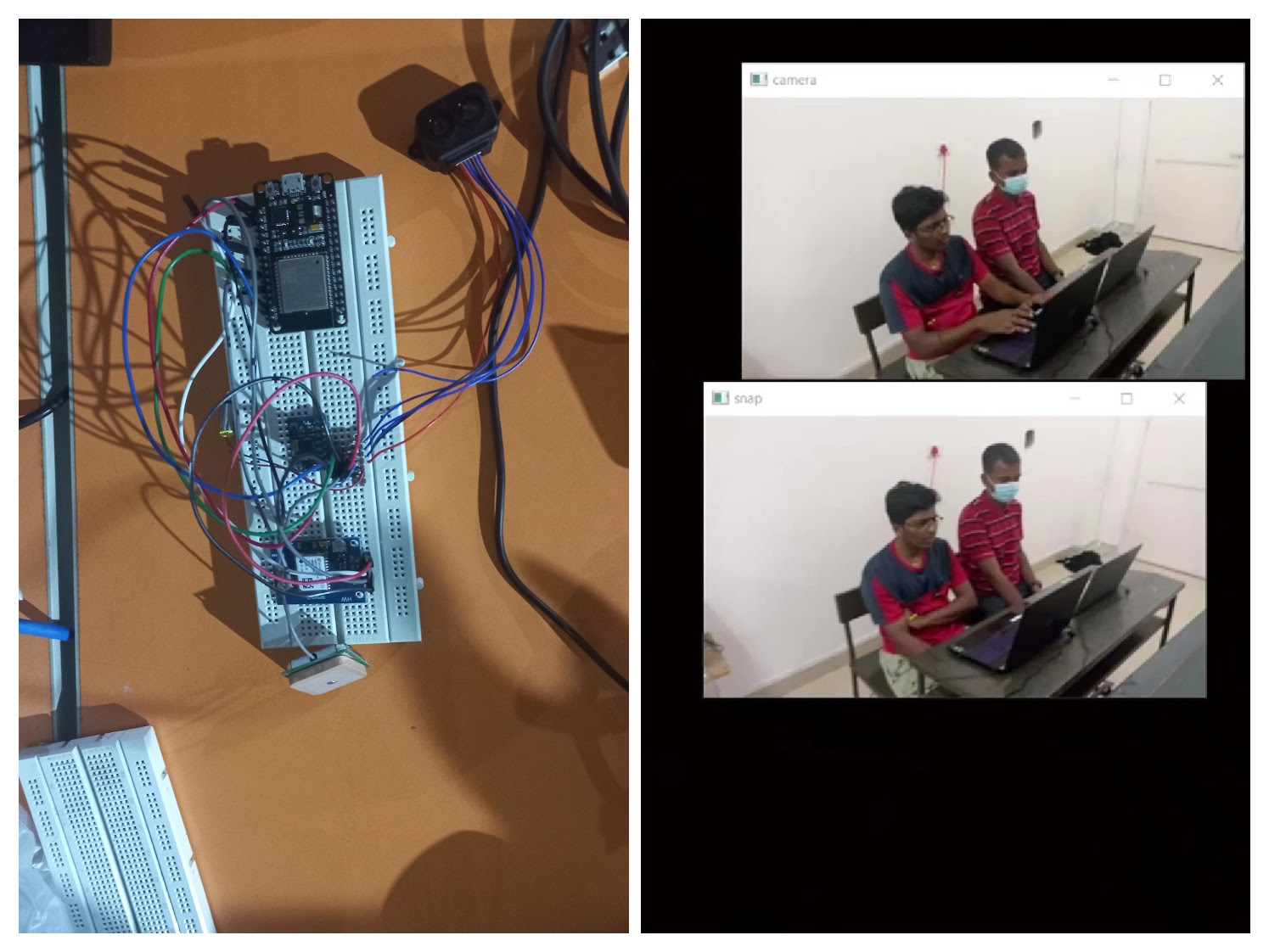

- Computation Platforms: Raspberry Pi, Jetson Nano

- Sensors: time-of-flight (ToF) LIDAR, MPU6050 inertial measurement unit (accelerometer and gyroscope)

- Safety Features: fall detection, GPS tracking, obstacle detection

- Power Source: Li-Po battery
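The MPU6050 reports acceleration as raw 16-bit register values, so the fall-detection logic first has to convert those bytes into physical units. Below is a minimal sketch of that conversion. It assumes the sensor's default ±2 g range (AFS_SEL = 0, datasheet sensitivity 16384 LSB/g) and that the six accelerometer bytes have already been read starting at register ACCEL_XOUT_H (0x3B). The function names are hypothetical and do not come from this project's code.

```python
from typing import Sequence, Tuple

# Assumes the MPU6050's default accelerometer range of +/-2 g (AFS_SEL = 0),
# for which the datasheet sensitivity is 16384 LSB per g.
ACCEL_SENSITIVITY_LSB_PER_G = 16384.0


def raw_to_int16(high: int, low: int) -> int:
    """Combine a big-endian register pair (high byte first) into a signed 16-bit value."""
    value = (high << 8) | low
    # The registers hold two's-complement data, so values >= 0x8000 are negative.
    return value - 0x10000 if value & 0x8000 else value


def accel_bytes_to_g(data: Sequence[int]) -> Tuple[float, float, float]:
    """Convert the 6 bytes read from ACCEL_XOUT_H (0x3B) onward into (x, y, z) in g."""
    if len(data) != 6:
        raise ValueError("expected 6 bytes: X_H, X_L, Y_H, Y_L, Z_H, Z_L")
    x, y, z = (
        raw_to_int16(data[i], data[i + 1]) / ACCEL_SENSITIVITY_LSB_PER_G
        for i in (0, 2, 4)
    )
    return x, y, z
```

On a Raspberry Pi, the bytes could be obtained with the `smbus2` package. For example, call `SMBus(1).write_byte_data(0x68, 0x6B, 0)` to wake the sensor through PWR_MGMT_1, then `read_i2c_block_data(0x68, 0x3B, 6)`. The bus number 1 and the address 0x68 (AD0 tied low) are assumptions about the wiring.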

Key Features

- Wearable prototype that delivers audio instructions describing the user's surroundings.

- Utilizes CLIP and LXMERT deep learning models for image captioning and visual question answering.

- A wireless camera mounted on the headband captures images, which are processed on a Raspberry Pi or Jetson Nano.

- Safety features include fall detection via MPU6050 and obstacle detection through LIDAR.

- Haptic motors provide vibration feedback that helps users navigate around obstacles.

- GPS tracking enables location-based services, and emergency contacts are notified in case of a fall.
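The fall-detection and obstacle-feedback features above can be illustrated with a minimal sketch. It uses a common two-phase fall heuristic: a short free-fall phase, where the acceleration magnitude drops well below 1 g, followed by an impact spike. It also uses a linear mapping from LIDAR distance to haptic-motor intensity. This is not the project's actual method. Every threshold, distance band and function name below is a hypothetical placeholder.

```python
import math
from typing import Iterable, Tuple

# All thresholds below are hypothetical placeholders that would need tuning
# on real recordings; they are not values from this project.
FREE_FALL_G = 0.4       # |a| below this suggests the wearer is falling
IMPACT_G = 2.5          # |a| above this suggests the body hit the ground
MAX_GAP_SAMPLES = 50    # impact must follow free fall within this many samples
NEAR_M = 0.3            # full vibration at or closer than this distance (metres)
FAR_M = 2.0             # no vibration at or beyond this distance (metres)


def detect_fall(samples: Iterable[Tuple[float, float, float]]) -> bool:
    """Return True if a free-fall phase is followed shortly by an impact spike.

    `samples` are (ax, ay, az) accelerometer readings in g at a fixed rate.
    """
    free_fall_at = None  # index of the most recent free-fall sample
    for i, (ax, ay, az) in enumerate(samples):
        magnitude = math.hypot(ax, ay, az)
        if magnitude < FREE_FALL_G:
            free_fall_at = i
        elif free_fall_at is not None:
            if i - free_fall_at > MAX_GAP_SAMPLES:
                free_fall_at = None  # too long since free fall; forget it
            elif magnitude > IMPACT_G:
                return True
    return False


def vibration_duty(distance_m: float) -> float:
    """Map a LIDAR distance reading to a haptic-motor duty cycle in [0, 1].

    Closer obstacles produce stronger vibration, scaling linearly between
    NEAR_M and FAR_M.
    """
    if distance_m <= NEAR_M:
        return 1.0
    if distance_m >= FAR_M:
        return 0.0
    return (FAR_M - distance_m) / (FAR_M - NEAR_M)
```

Requiring both phases, rather than a single impact threshold, helps reject events such as stamping a foot, which produce a spike without a preceding free fall. The duty cycle could drive a motor through a PWM-capable pin, for example by assigning it to the `value` property of a `gpiozero.PWMOutputDevice`. The pin choice would depend on the prototype's wiring.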

This device not only addresses the accessibility needs of visually impaired people but also emphasizes safety and affordability. Its combination of cutting-edge technology and thoughtful design makes it a promising assistive solution.