Objective

As a member of this project, I played a key role in developing Pepper, a personal assistant robot platform that combines several artificial intelligence algorithms.

Technology Stack

- SLAM

- Microsoft Kinect

- ROS Gazebo

- Deep Learning

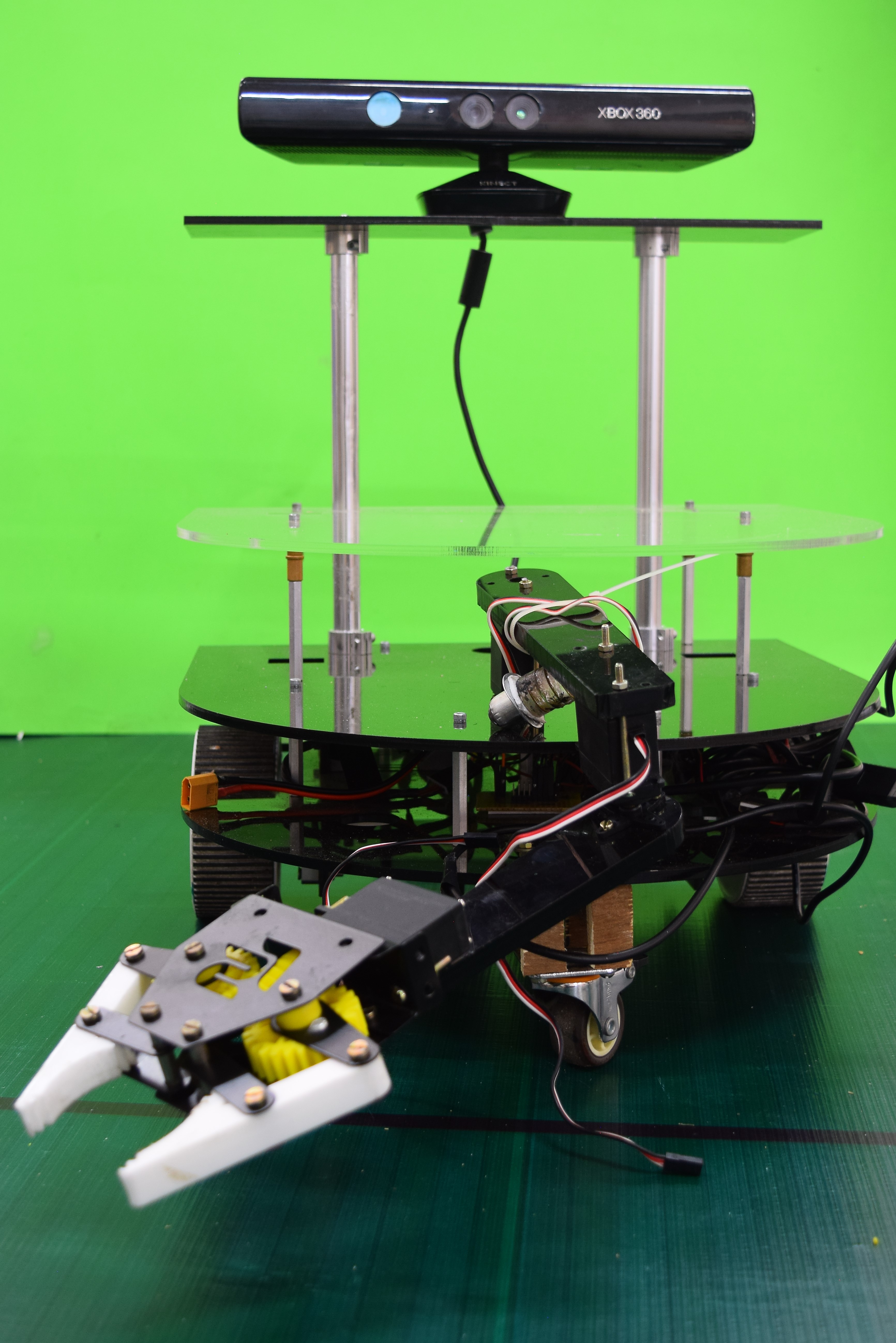

Robot Design

The robot's chassis, designed in AutoCAD and fabricated from acrylic, has three layers that house components such as the battery, driver, gripper arm, and onboard computer. A Microsoft Kinect is mounted on the top pedestal, while high-torque motors and a castor wheel ensure stable movement.

Mapping

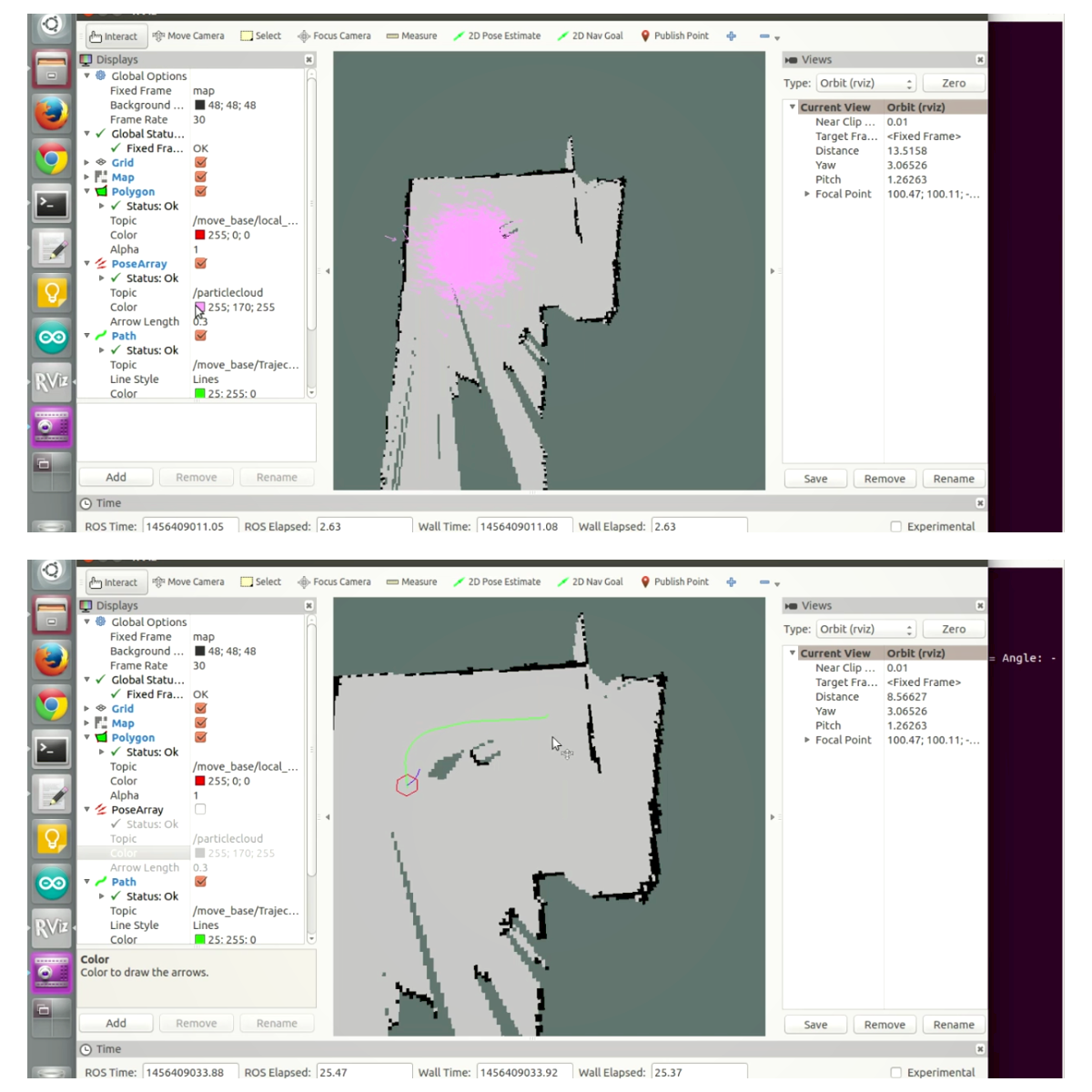

Pepper navigates its environment by building a map with the onboard Kinect sensor and the gmapping ROS package, which implements Simultaneous Localisation and Mapping (SLAM).

Localisation

To correct for wheel drift, we implemented a Kalman filter that fuses data from multiple sensors, including encoders and a gyroscope, for accurate pose estimation.

Speech Recognition
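The encoder and gyroscope fusion used for localisation can be illustrated with a one-dimensional Kalman filter on the robot's heading. This is a minimal sketch rather than Pepper's actual filter: the choice to predict with the gyroscope rate and correct with an encoder-derived heading, the differential-drive geometry, the class and function names, and the noise values `q` and `r` are all assumptions made for illustration.

```python
import math


def encoder_heading(left_m, right_m, wheel_base_m):
    """Heading (rad) of a differential-drive base from total wheel travel."""
    return (right_m - left_m) / wheel_base_m


class HeadingKalmanFilter:
    """Scalar Kalman filter over the robot heading (hypothetical example)."""

    def __init__(self, theta0=0.0, p0=1.0, q=1e-4, r=1e-2):
        self.theta = theta0  # heading estimate, rad
        self.p = p0          # variance of the estimate
        self.q = q           # process noise added per prediction (assumed)
        self.r = r           # encoder heading measurement noise (assumed)

    def predict(self, gyro_rate, dt):
        """Propagate the heading with the gyroscope yaw rate (rad/s)."""
        self.theta += gyro_rate * dt
        self.p += self.q

    def update(self, measured_theta):
        """Correct the estimate with a heading derived from the encoders."""
        # Wrap the innovation into [-pi, pi] so angles near +/-pi behave.
        diff = measured_theta - self.theta
        innovation = math.atan2(math.sin(diff), math.cos(diff))
        gain = self.p / (self.p + self.r)
        self.theta += gain * innovation
        self.p *= 1.0 - gain
        return self.theta


kf = HeadingKalmanFilter()
kf.predict(gyro_rate=0.10, dt=0.1)  # gyro reports 0.10 rad/s for 0.1 s
fused = kf.update(encoder_heading(0.000, 0.003, 0.30))  # encoders: 0.01 rad
```

A full pose filter would track x, y, and heading together, but the predict and update steps follow the same pattern.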

Pepper identifies speakers from voice samples using a text-independent machine learning algorithm, with Mel Frequency Cepstral Coefficient (MFCC) vectors used for both training and real-time recognition.

Object Recognition and Pick up
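Text-independent speaker identification from MFCC vectors can be sketched with a simple nearest-centroid matcher. The learning algorithm Pepper uses is not named here, so the averaging model below is only a stand-in. The function names and the toy two-dimensional frames are hypothetical, and real MFCC frames would come from a feature extractor that is not shown.

```python
import math


def mean_vector(frames):
    """Average a list of equal-length feature frames column by column."""
    count = len(frames)
    return [sum(column) / count for column in zip(*frames)]


def enroll(samples_by_speaker):
    """Build one centroid per speaker from that speaker's MFCC frames."""
    return {name: mean_vector(frames)
            for name, frames in samples_by_speaker.items()}


def identify(models, frames):
    """Return the enrolled speaker whose centroid is closest to the query."""
    query = mean_vector(frames)
    return min(models, key=lambda name: math.dist(models[name], query))


# Toy 2-D "MFCC" frames; real frames carry more coefficients per frame.
models = enroll({
    "alice": [[1.0, 0.0], [1.2, 0.2]],
    "bob": [[-1.0, 0.5], [-0.8, 0.3]],
})
speaker = identify(models, [[0.9, 0.1], [1.1, 0.0]])
```

Averaging over all frames ignores what was said, which is what makes the match text-independent. A production system would typically replace the centroid with a richer per-speaker model.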

Pepper recognizes objects with a Convolutional Neural Network (CNN) trained on 1000 object classes and measures each object's depth with the Kinect. The robotic arm, which has 3 degrees of freedom (DoF), approaches the object based on that depth, and the gripper, with 1 DoF, picks up the recognized object.

This project integrates SLAM, Microsoft Kinect, ROS Gazebo, and Deep Learning to make Pepper an advanced personal assistant capable of efficient navigation, speech recognition, and intelligent object interaction.
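The depth-guided approach to an object can be sketched with the pinhole camera model, which turns a pixel and its Kinect depth reading into a 3D point in the camera frame. The intrinsics (`FX`, `FY`, `CX`, `CY`), the arm reach, and both function names are illustrative assumptions, not values from the project.

```python
def pixel_to_camera_point(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth (m) into camera-frame X, Y, Z."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def approach_distance(depth_m, arm_reach_m):
    """Forward travel (m) still needed before the object is within reach."""
    return max(0.0, depth_m - arm_reach_m)


# Assumed intrinsics for a 640x480 depth image; calibrate a real sensor.
FX = FY = 525.0
CX, CY = 319.5, 239.5

# Hypothetical detection: object centred at pixel (400, 260), 0.80 m away.
target = pixel_to_camera_point(400, 260, 0.80, FX, FY, CX, CY)
remaining = approach_distance(target[2], arm_reach_m=0.35)
```

In practice the camera-frame point would still have to be transformed into the arm's base frame before solving for joint angles. That transform depends on where the Kinect sits relative to the arm, so it is left out here.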