Objective

As the mentor for this project, I guided the development of a system that creates editable 3D models of real-world objects from a minimal set of images, with a focus on Structure from Motion and Neural Radiance Fields.

Technology Stack

- NeRF

- TensorFlow

- PyTorch

- Deep Learning

Key Features

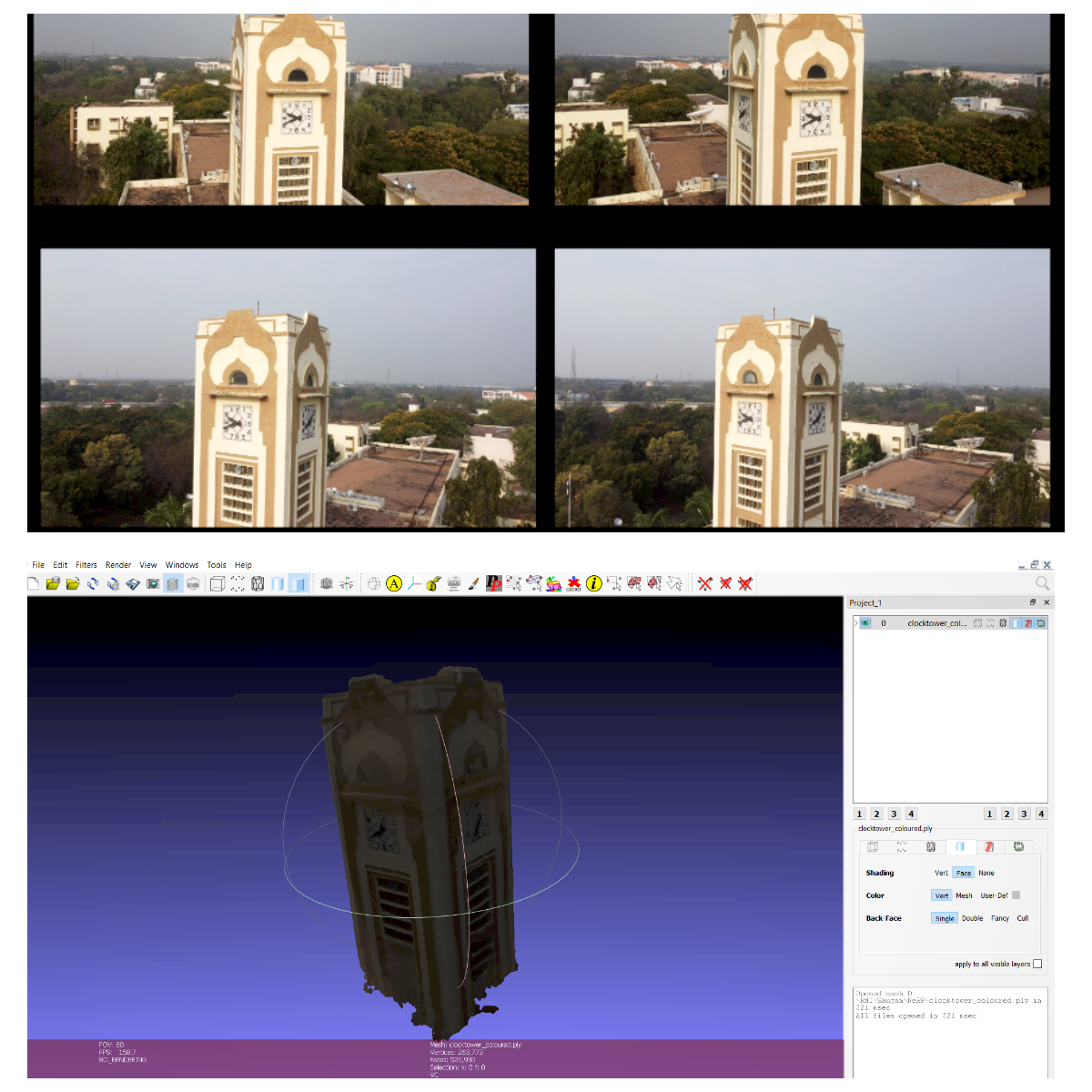

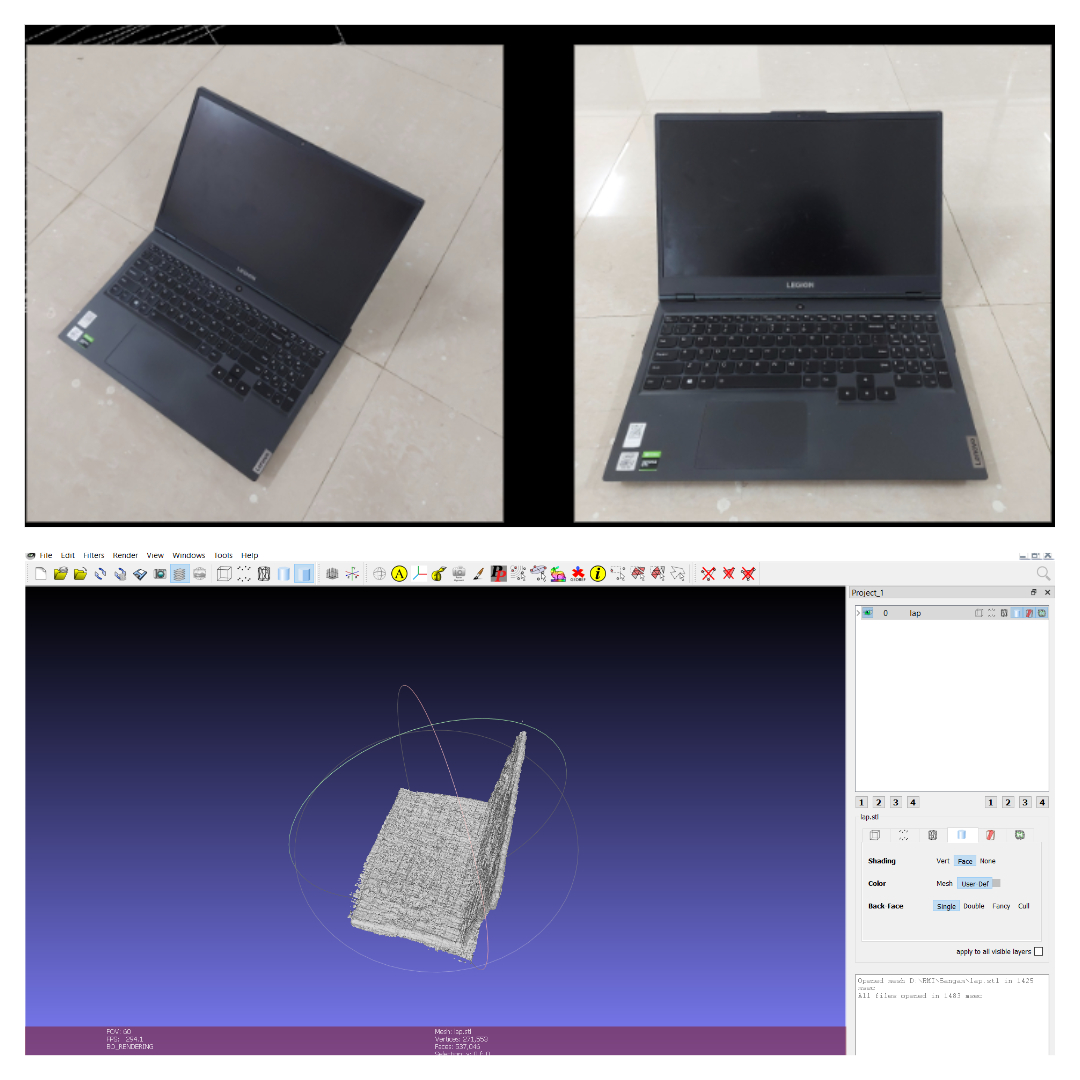

The project targets 3D reconstruction for virtual environments, with applications in scene rendering and self-driving cars. Key features include:

- User-Input Image Processing: Users provide images of the object, which start the pipeline.

- Structure from Motion (SfM): Estimates the camera's pose (position and orientation) relative to the object for each input image.

- Neural Radiance Fields (NeRF): Uses a neural network to predict the RGB color and opacity (volume density) at each sampled point in 3D space.

- Rendering Equation: Integrates the NeRF outputs along each camera ray to render the object's volume in 3D space.

- Editable 3D Models: Creates editable 3D models from limited input images.
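
The NeRF and rendering steps above can be illustrated with a minimal NumPy sketch of the standard volume-rendering quadrature used with NeRF-style models. It is not taken from the project's code: the function name `composite_ray`, the sample count, and the toy density profile are assumptions chosen for illustration, and the colors and densities that a trained network would predict are replaced here by hand-written arrays.

```python
import numpy as np

def composite_ray(rgb, sigma, t_vals):
    """Alpha-composite per-sample colors along a single camera ray.

    rgb:    (N, 3) colors in [0, 1] at each sample (hypothetical network output)
    sigma:  (N,) non-negative volume densities at each sample
    t_vals: (N,) increasing sample depths along the ray
    """
    # Distance between adjacent samples; the last interval is treated as very large.
    deltas = np.append(np.diff(t_vals), 1e10)
    # Opacity contributed by each interval.
    alpha = 1.0 - np.exp(-sigma * deltas)
    # Transmittance: fraction of light that reaches sample i unobstructed.
    trans = np.cumprod(np.append(1.0, 1.0 - alpha[:-1]))
    weights = trans * alpha
    # The pixel color is the weighted sum of the sample colors.
    color = (weights[:, None] * rgb).sum(axis=0)
    return color, weights

# Toy ray: empty space up to depth 4, then a dense red surface.
t = np.linspace(2.0, 6.0, 64)
rgb = np.tile(np.array([1.0, 0.0, 0.0]), (64, 1))
sigma = np.where(t > 4.0, 50.0, 0.0)
pixel, weights = composite_ray(rgb, sigma, t)
```

In this toy setup the samples in front of the surface receive zero weight, so the pixel color comes entirely from the dense red region. In a full pipeline the same compositing would run over every ray cast from the camera poses recovered by SfM.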

This project, powered by NeRF, TensorFlow, PyTorch, and Deep Learning, offers a versatile approach to generating detailed, editable 3D models from minimal visual input.